In a typical hospital setting, doctors first try to diagnose cancer by examining the reports of imaging tests such as a CT scan or an MRI. This is followed by a biopsy, a microscopic analysis of a sample of the affected tissue. Though invasive, biopsies help to conclusively identify a cancerous growth. But the process is both stressful and expensive for the patient.

Researchers at IIIT Hyderabad have developed a technique that helps in the automatic diagnosis and prognosis of certain types of cancers.

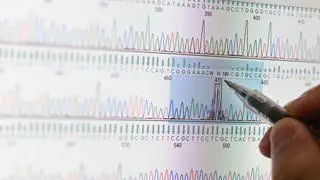

The method harnesses the power of computer vision and deep learning networks. The researchers have demonstrated that by minutely analysing digital images of biopsies from patients with kidney cancer, it is possible to diagnose the disease and also predict survival outcomes.

Fast, objective assessment

The IIIT team led by PK Vinod from the Centre for Computational Natural Sciences & Bioinformatics (CCNS&B) collaborated with CV Jawahar from the Centre for Visual Information Technology for the image analysis of tissue samples.

The aim was to see how a computer can interpret digital images of biopsies, which contain markers of disease progression and other key information that can lead to diagnosis and prediction, explains Vinod.

A manual microscopic analysis takes a considerable amount of effort and time. In treating cancer, time is of the essence, and machines speed up the process. Machines also help when opinions differ. “When opinions are divided, there is a lot of subjectivity and it varies from pathologist to pathologist. A computer-aided interpretation could help in resolving these sorts of issues by aiding and not replacing the pathologist,” says Vinod.

The research focused on kidney cancer because it is among the most common cancers in men and women. With the help of convolutional neural networks, the researchers were able to distinguish renal cancers from normal tissue. Next, the model automatically classified the renal cancer subtypes. Finally, it was possible to identify features that could predict survival outcomes.
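For readers curious about what a convolutional network does with an image, the basic building block can be sketched in a few lines of Python. This is only an illustration of the convolution operation on a toy grid of pixel values, not the researchers' model; the function name `conv2d`, the 4x4 `tile` and the `edge_kernel` values are all assumptions made for the example.

```python
def conv2d(image, kernel):
    """Slide `kernel` over `image` (no padding) and return the response map."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    out = []
    for i in range(out_h):
        row = []
        for j in range(out_w):
            # Weighted sum of the pixels currently under the kernel window.
            total = 0
            for di in range(kh):
                for dj in range(kw):
                    total += image[i + di][j + dj] * kernel[di][dj]
            row.append(total)
        out.append(row)
    return out


# Hypothetical 4x4 image tile: dark left half (0), bright right half (1).
tile = [[0, 0, 1, 1] for _ in range(4)]

# Hypothetical hand-written filter that responds to a dark-to-bright vertical edge.
edge_kernel = [[-1, 1],
               [-1, 1]]

response = conv2d(tile, edge_kernel)
for row in response:
    print(row)
```

The filter responds only where the tile changes from dark to bright. A trained network learns many such filters from labelled images rather than having them written by hand, and stacks them in layers to pick up progressively larger tissue patterns.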

The model achieved over 90 per cent accuracy in determining whether the histopathological images reflected a tumour, and 94 per cent accuracy in determining the subtypes of cancer. Survival prediction was done based not just on nuclei features but also on tumour shapes. The tissue of origin was also important. “When we take a tissue sample for biopsy, we know where we’re taking it from but we’re not sure of the site of origin. We were able to show that there is site of origin difference between renal cancers,” he says, adding that the model could generate a risk index based on these factors.
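One common way to turn several image-derived measurements into a single risk index is a weighted sum, followed by a split of patients into higher-risk and lower-risk groups. The sketch below shows that general idea only. The article does not describe how the team's index is computed, so the feature names, weights and patient values here are hypothetical and do not come from the study.

```python
import statistics

# Hypothetical weights; in practice these would be fitted to survival data.
WEIGHTS = {"nuclei_feature": 0.8, "tumour_shape_feature": 0.5}


def risk_index(features, weights=WEIGHTS):
    """Weighted sum of a patient's (standardised) feature values."""
    return sum(weights[name] * features[name] for name in weights)


def stratify(scores):
    """Label each patient 'high' if their score is above the cohort median."""
    median = statistics.median(scores.values())
    return {pid: ("high" if s > median else "low") for pid, s in scores.items()}


# Made-up patients with made-up standardised feature values.
patients = {
    "A": {"nuclei_feature": 0.0, "tumour_shape_feature": 0.0},
    "B": {"nuclei_feature": 1.0, "tumour_shape_feature": 2.0},
    "C": {"nuclei_feature": -1.0, "tumour_shape_feature": 0.0},
}

scores = {pid: risk_index(f) for pid, f in patients.items()}
groups = stratify(scores)
print(scores)
print(groups)
```

Splitting at the median is a simple design choice for illustration; any real index would need to be validated against observed survival before it could guide treatment.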

Digital images for the study were obtained from the Cancer Genome Atlas Project, a project funded by the US Government that catalogued and characterised 33 different types of cancers using genome sequencing.

The team now plans to expand the scope of its research by working with Indian cancer data sets, beginning with lung cancer, which is the most prevalent.

This, however, is challenging as digital images of tissue samples are simply not available. Researchers are in talks with pathologists at various hospitals in Hyderabad to popularise scanning and digitising microscopic slides. “At the institute-level too, we want to aggressively push in this direction by hiring post-doctoral students to this project,” says Vinod.

The institute proposes to build a digital repository of histopathological images of cancer patients in India. Commercial-scale application of the technique lies in the future. At present, the focus is on assisting the pathologist, establishing the technique's reliability and extending its application to other cancers that are common in India, Vinod says.
