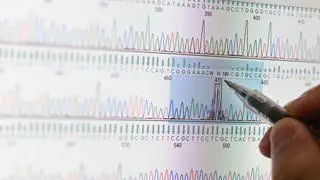

From picking up signals of breast or lung cancer in individuals without an invasive test to helping shorten the time taken to develop a drug, AI (artificial intelligence) features ever more frequently in the healthcare landscape.

The technology is growing at a dynamic pace and it sometimes traverses uncharted territory involving transactional benefits, amplification of misinformation and other ethical considerations—sensitive issues that have the attention of health administrators.

In fact, it’s like running down a rabbit hole strewn with beneficial, complex or risky outcomes, and the more one ventures down that pathway, the more challenges unfold.

Less than a fortnight ago, the World Health Organization, while recognising the benefits of AI use in health, especially in low-resource countries, also sounded a note of caution. AI technologies, including large language models, “are being rapidly deployed, sometimes without a full understanding of how they may perform, which could either benefit or harm end-users, including health-care professionals and patients. When using health data, AI systems could have access to sensitive personal information, necessitating robust legal and regulatory frameworks for safeguarding privacy, security and integrity,” it said, outlining features to guide countries looking to regulate AI use in health.

In India, the segment is broadly covered by digital regulatory frameworks involving data use, consent and health technology assessments (HTA), say AI industry representatives. But there is a need to refine and strengthen guidelines, they say, without stifling research.

Dr Shibu Vijayan, Medical Director (Global Health), Qure.ai, says there is a broad vision on harnessing the benefits of the technology to deliver health, as seen in the various digital initiatives outlined by the authorities. What is needed now is granular detail, and checks and balances, in the patient's interest, he says. Ahead of adoption, the technology is evaluated through HTAs, for example. Robust guidelines are also needed at the research level, he explains, to strengthen the firewalls that protect patient details from being breached.

The Indian Council of Medical Research outlined ethical guidelines for AI in healthcare research earlier this year. Robust guidelines keep out unregulated players, says Vijayan, as one bad experience is enough to tarnish the good work done by the rest. Evaluators also need to be trained, he says, as the technology constantly evolves.

There are multiple aspects to consider, including the adoption of AI, its implementation and the care pathway for the patient screened by this technology; “a clinician should always be in the loop,” he says. Technology can help the clinician triage and prioritise a person whose x-ray may be showing a cancerous nodule, for example, he explains.

Qure.ai recently collaborated with AstraZeneca and the Karnataka government to screen for 29 lung ailments, including cancer, from one x-ray; last month it teamed up with PATH India in Maharashtra to improve TB and Covid-19 screening using its AI-powered software.

Local solutions

Internationally, health regulators are evaluating health-tech and grappling with its challenges, observes Kalyan Sivasailam, Founder and Chief Executive of 5C Network. A strong advocate of local solutions, especially for low-resource settings, he says home-grown firms are able to understand ground realities better and develop solutions.

Responding to concerns about proliferating health-tech ventures and their predictive therapeutic claims, he says there is a template to assess health-tech companies, although it is presently voluntary.

5C Network is a Tata1MG-backed health-tech start-up involved with radiology interpretation. Its AI-driven therapeutic interpretations assist a radiologist in prioritising patients needing urgent attention, he explains.

In its guidance, the WHO put the regulatory spotlight on transparency and documentation, validation and quality of data, expressly stating intended use, consent, data protection and privacy, keeping out bias, and protecting against cyber threats. It called for a collaborative approach to assess and test these products before they are deployed.

Siddharth Shah, Senior Manager (Healthcare Advisory) with MarketsandMarkets, agrees that broad policy frameworks governing digital and health ventures are in place. The concern involves implementation, he says, as there are nuances around sharing data, consent and defining the transactional benefit to the individual, for example.

Individual consent is crucial for sharing data, but challenges emerge given the multiple languages and varying literacy levels involved. Also, is a health-tech company allowed to commercially use data shared by a patient with a hospital? Is that a breach of contract, or will benefits trickle back to the patient?

The AI rabbit hole throws up many questions, but industry voices are optimistic that they are being addressed.
